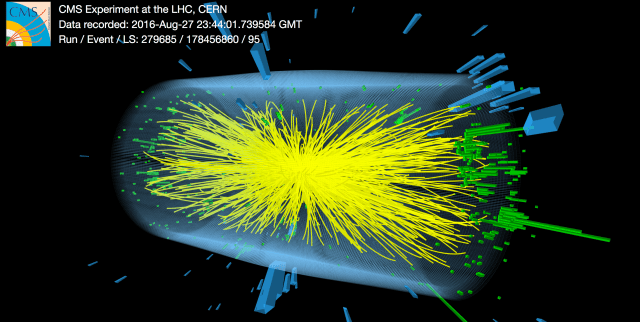

Look at the image above. Every yellow track you see is a charged particle that survived long enough, and decayed into something stable enough, for the detector to reconstruct it. The collision itself (the primary event, at an effective temperature roughly thirteen orders of magnitude above the detector’s operating environment) is not directly visible. What is visible is a projection: the subset of the event’s products whose spectral support overlaps with what the detector is built to see.

Much of what lies outside the detector’s reconstruction regime is treated operationally as noise, calibration residual, or simply remains unreconstructed.

In this programme, the transforms that carry an event from its native regime into the detector’s regime are treated as the physics target rather than merely a calibration problem.

The search for the needle is not a brute-force scan of a galaxy. It is a constrained inverse problem over regions where our current pipelines have learned to stop looking.

The Thermal Gradient as Physical Object

A TeV-scale collision occurs at an effective temperature of 10¹³–10¹⁶ K. The detector that records it operates near or below room temperature, a gap of thirteen or more orders of magnitude. Standard reconstruction treats this gap as an engineering inconvenience: calibrate it, correct for it, close it as a systematic error budget line, and move on.
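
As a rough check on the size of this gap, the sketch below converts an energy scale to an effective temperature via T = E / k_B. Identifying the effective temperature with E / k_B and taking 300 K as the detector temperature are assumptions made here for illustration, and the function names are hypothetical.

```python
import math

K_B_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def effective_temperature_kelvin(energy_ev):
    """Temperature T such that k_B * T equals the given energy scale (assumed mapping)."""
    return energy_ev / K_B_EV_PER_K


def orders_of_magnitude_gap(t_hot, t_cold):
    """Base-10 logarithm of the ratio between two temperatures."""
    return math.log10(t_hot / t_cold)


t_tev = effective_temperature_kelvin(1e12)    # 1 TeV expressed in eV
gap = orders_of_magnitude_gap(t_tev, 300.0)   # 300 K is an assumed detector temperature
print(f"T(1 TeV) ~ {t_tev:.2e} K, gap ~ {gap:.1f} orders of magnitude")
```

Under these assumptions a 1 TeV scale corresponds to about 1.2 × 10¹⁶ K, between thirteen and fourteen orders of magnitude above room temperature.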

We propose a different reading. The thermal gradient between event and detector is not noise to be suppressed. It is a sequence of chart transitions — each one a transform linking an upstream observational regime to a downstream one — and those transforms carry physical information that current pipelines systematically discard.

The displaced secondary vertex visible in some event displays is one familiar example of how upstream processes can become detector-accessible only after intermediate transformations and decays. The calibration pipeline that makes the image intelligible is performing a layered reduction across these regimes. The question this programme asks is whether that reduction is discarding structured information along with the noise.

What if we kept it instead?

The Binary Search Protocol

This is not a proposal to instrument every point along the thermal gradient simultaneously. It is a proposal to treat the gap as a search space and apply the oldest efficient search strategy available: bisection.

Place sensors — or repurpose existing detector layers — at the midpoint of the accessible temperature range. Compare the signal structure above and below that midpoint: an A/B test between adjacent charts. Where the two charts agree, the transform is locally trivial. Where they disagree in structured ways, the transform carries information. Bisect again toward the disagreement. Repeat.
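
The loop described above can be sketched as follows. This is a minimal illustration, not the programme's protocol: `mismatch` is a hypothetical user-supplied measure of structured disagreement between two charts, positions are expressed as log10 of temperature in kelvin by assumption, and the toy response with its break at 7.3 is made up.

```python
def bisect_toward_disagreement(lo, hi, mismatch, tol=1e-3, max_steps=60):
    """Narrow [lo, hi] toward the half whose adjacent charts disagree more.

    mismatch(a, b) is a hypothetical residual (>= 0) between the charts at
    positions a and b. Returns the final (lo, hi), or None when both halves
    agree, i.e. the transform looks locally trivial.
    """
    for _ in range(max_steps):
        if hi - lo <= tol:
            break
        mid = 0.5 * (lo + hi)
        below = mismatch(lo, mid)   # A/B test across the lower half
        above = mismatch(mid, hi)   # A/B test across the upper half
        if below == 0 and above == 0:
            return None
        if below >= above:
            hi = mid                # disagreement sits in the lower half
        else:
            lo = mid                # disagreement sits in the upper half
    return lo, hi


# Toy example: a response that changes abruptly at log10(T) = 7.3 (made-up value).
def toy_response(log_t):
    return 1.0 if log_t >= 7.3 else 0.0


def toy_mismatch(a, b):
    return abs(toy_response(b) - toy_response(a))


interval = bisect_toward_disagreement(2.5, 16.0, toy_mismatch)
print(interval)
```

Each step halves the interval, so localizing a disagreement to one part in a thousand of the starting range takes on the order of ten comparisons rather than a scan of every position.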

This is not a blind search. The starting points are already known: frequency bands or diagnostic channels often treated operationally as beam-related noise, the radial distances from the vertex where shower models require large corrections, the timing windows discarded as pre-pulse or post-pulse artifact, the calibration residuals that never fully close. Each of these is a location where the pipeline encountered something it could not classify and chose to minimize. In binary search terms, the bug is already partially localized.

Version 12 of the Manifold Relativity series sharpens this protocol into a falsifiable prediction (P23): for two co-located detectors at different effective temperatures, any residual reconstruction disagreement should be tested against a thermal information-geometric separation measure, for which the Fisher information distance is a computable first proxy. The first-sensitive variables are identified: timing resolution, spectral channel fragmentation, inferred resonance lifetimes. If the residual is consistent with zero across all accessible temperature separations, the chart-mismatch mechanism is falsified.
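
One way to make the "computable first proxy" concrete is a toy model in which each detector's thermal state is an exponential (Boltzmann) energy distribution with inverse temperature β = 1/T, in units where k_B = 1. For that one-parameter family the Fisher information is 1/β², so the Fisher-Rao distance reduces to |ln(T₁/T₂)|. The choice of model is an assumption made here for illustration, not the definition used in the preprint, and the function names are hypothetical.

```python
import math


def fisher_rao_distance_exponential(t1, t2):
    """Closed form |ln(t1 / t2)| for the exponential family (toy model)."""
    return abs(math.log(t1 / t2))


def fisher_rao_distance_numeric(t1, t2, n=10000):
    """Midpoint-rule integral of sqrt(I(beta)) = 1 / beta between the two
    inverse temperatures. The linear grid suits modest separations only."""
    b_lo, b_hi = sorted((1.0 / t1, 1.0 / t2))
    h = (b_hi - b_lo) / n
    return sum(h / (b_lo + (i + 0.5) * h) for i in range(n))


d_wide = fisher_rao_distance_exponential(1e16, 300.0)   # assumed endpoint temperatures
d_check = fisher_rao_distance_numeric(300.0, 77.0)      # 77 K is an illustrative value
print(d_wide, d_check)
```

In this toy model the distance grows only logarithmically with the temperature ratio, so a gap of many orders of magnitude still maps to a modest, easily tabulated separation.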

| Regime | Candidate sensor | Status |
|---|---|---|
| Hot end: near collision native chart | Diamond pixel detectors; transition radiation detectors | Existing or mature detector classes; reinterpretation and/or protocol changes required |
| Intermediate: cascade midpoints | Prompt gamma calorimetry at staged radii | Incremental instrumentation |
| Cool end: near detector native chart | Microwave waveguide / diode; RF beam monitors | Existing infrastructure; currently filtered as beam noise |

The HPAIC Computational Architecture

The signal analysis problem this protocol generates is not incrementally harder than what current pipelines handle. It is categorically different. Standard reconstruction looks for known particles in well-characterized detector responses. This programme looks for structured information in what every existing pipeline has been trained to classify as noise, across thirteen orders of magnitude of spectral separation, using inter-chart transforms that have never been formally characterized.

That requires a different computational architecture. The HPAIC framework is proposed as a candidate constrained inverse-problem architecture built from established mathematical families. It is not a proven engine. The specific transform family linking the W-manifold’s spectral structure to detector observables remains the missing theoretical ingredient — the necessary precondition for making these methods scientifically decisive rather than merely suggestive.

Subject to that constraint, the proposed four-layer mathematical stack is:

Layer 1 — Multiscale Flow Reasoning

Borrowing renormalization-group-like intuition as an analogy for structured cross-scale inference — not asserting that standard RG flow applies directly, but using its conceptual machinery to describe how structure evolves across adjacent spectral regimes. (V12 formally indexes the question of whether the vertical comparison maps constitute a Wilsonian RG flow as Open Problem O36.)

Layer 2 — Inverse-Operator Methods

Adjoint sensitivity analysis and backpropagation-style gradient refinement to propagate constraints backward through the transform hierarchy — not to guarantee unique recovery of hidden source structure, but to rank candidate upstream structures consistent with observed downstream residuals.
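
A minimal sketch of the adjoint idea, assuming each stage of the transform hierarchy can be approximated by a known linear map. The matrices, the source vector, and the function names are hypothetical. The gradient of the data misfit with respect to the upstream source is obtained by pushing the downstream residual backward through the transposed stages.

```python
def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def transpose(m):
    return [list(col) for col in zip(*m)]


def forward(chain, x):
    """Apply each stage in order: upstream source -> downstream observable."""
    for m in chain:
        x = matvec(m, x)
    return x


def loss(chain, x, observed):
    return 0.5 * sum((p - o) ** 2 for p, o in zip(forward(chain, x), observed))


def adjoint_gradient(chain, x, observed):
    """Gradient of the loss w.r.t. x: transposed stages applied in reverse order."""
    grad = [p - o for p, o in zip(forward(chain, x), observed)]
    for m in reversed(chain):
        grad = matvec(transpose(m), grad)
    return grad


# Hypothetical two-stage chain and source, for illustration only.
chain = [[[1.0, 0.5], [0.0, 2.0]], [[0.8, 0.0], [0.3, 1.0]]]
true_source = [1.0, -2.0]
observed = forward(chain, true_source)

x = [0.0, 0.0]
for _ in range(2000):               # plain gradient descent on the misfit
    g = adjoint_gradient(chain, x, observed)
    x = [xi - 0.1 * gi for xi, gi in zip(x, g)]
print(x)
```

The toy chain is invertible, so descent recovers the source. When the real transforms lose information, the same gradients can only rank candidate sources by misfit, which is the weaker goal stated above.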

Layer 3 — Sparse Recovery

Compressed sensing applied to signals that may be weak, intermittently expressed, or present only in specific chart transitions — structure that standard averaging and integration windows systematically wash out.
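
As an illustration of sparse recovery, the sketch below uses iterative soft-thresholding (ISTA) to solve a small ℓ1-regularized least-squares problem with more unknowns than measurements. The measurement matrix, the data, and the penalty `lam` are made-up values, and ISTA is one standard choice among several, picked here for brevity.

```python
def soft_threshold(v, tau):
    """Shrink v toward zero by tau; values within tau of zero become exactly 0."""
    if v > tau:
        return v - tau
    if v < -tau:
        return v + tau
    return 0.0


def lasso_objective(a, y, x, lam):
    residual = [sum(aij * xj for aij, xj in zip(row, x)) - yi for row, yi in zip(a, y)]
    return 0.5 * sum(r * r for r in residual) + lam * sum(abs(xj) for xj in x)


def ista(a, y, lam, iterations=20000):
    n = len(a[0])
    # The squared Frobenius norm bounds the largest eigenvalue of A^T A,
    # so this step size is conservative but safe.
    step = 1.0 / sum(aij * aij for row in a for aij in row)
    x = [0.0] * n
    for _ in range(iterations):
        residual = [sum(aij * xj for aij, xj in zip(row, x)) - yi for row, yi in zip(a, y)]
        grad = [sum(a[i][j] * residual[i] for i in range(len(a))) for j in range(n)]
        x = [soft_threshold(xj - step * gj, step * lam) for xj, gj in zip(x, grad)]
    return x


# Two measurements, three unknowns; the data come from a single active
# component, x = [0, 0, 2] (toy values).
a = [[1.0, 0.0, 0.6], [0.0, 1.0, 0.6]]
y = [1.2, 1.2]
x_hat = ista(a, y, lam=0.01)
print(x_hat)
```

The ℓ1 penalty selects the single-component explanation over the denser solution [1.2, 1.2, 0], at the cost of a small shrinkage bias in the recovered amplitude.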

Layer 4 — Graph-Based Propagation Analysis

Treating the detector cascade as a propagation network rather than a linear reduction pipeline — allowing anomalous flow between regimes to appear as signal rather than as reconstruction failure.
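
A minimal sketch of the network view, assuming the cascade can be modelled as a weighted directed acyclic graph with known transfer weights. The node names, weights, and observed values are hypothetical. Expected signal is propagated downstream, and whatever the observations add or remove relative to that expectation is reported as anomalous flow rather than dropped.

```python
def propagate(edges, order, sources):
    """Push source signal through weighted edges, visiting nodes in topological order."""
    expected = dict.fromkeys(order, 0.0)
    expected.update(sources)
    for node in order:
        for child, weight in edges.get(node, []):
            expected[child] += weight * expected[node]
    return expected


def anomalous_flow(expected, observed):
    """Observed minus expected signal at each instrumented node."""
    return {node: observed[node] - expected[node] for node in observed}


# Hypothetical three-regime cascade: hot end -> midpoint -> cool end.
edges = {"hot": [("mid", 0.5)], "mid": [("cool", 0.2)]}
order = ["hot", "mid", "cool"]
expected = propagate(edges, order, {"hot": 10.0})
anomaly = anomalous_flow(expected, {"mid": 5.0, "cool": 1.3})
print(anomaly)
```

Here the midpoint matches expectation while the cool end shows an excess of about 0.3, which this representation keeps as a per-node quantity to be explained.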

Established mathematics already offers these candidate tools. What is still missing is a sufficiently explicit forward transform family to make them scientifically decisive. The HPAIC architecture does not replace that theoretical work. It is candidate machinery that becomes useful once even a provisional transform family is available.

Because unique recovery should not be assumed, Bayesian inversion is part of the candidate toolkit as well: the aim is to rank plausible hidden structures and transform families, not to claim a single guaranteed reconstruction.
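
The ranking idea can be sketched with a discrete set of candidate transform families and a Gaussian likelihood for the observed residuals. The candidate models, the data, and the noise level below are all made up for illustration, and equal priors are assumed unless others are supplied.

```python
import math


def rank_candidates(candidates, separations, residuals, sigma, priors=None):
    """Return (name, posterior probability) pairs, most probable first."""
    names = list(candidates)
    if priors is None:
        priors = {name: 1.0 / len(names) for name in names}
    log_post = {}
    for name in names:
        predict = candidates[name]
        chi2 = sum(((r - predict(d)) / sigma) ** 2 for d, r in zip(separations, residuals))
        log_post[name] = math.log(priors[name]) - 0.5 * chi2
    top = max(log_post.values())    # subtract the maximum for numerical stability
    weights = {name: math.exp(lp - top) for name, lp in log_post.items()}
    total = sum(weights.values())
    posterior = {name: w / total for name, w in weights.items()}
    return sorted(posterior.items(), key=lambda item: item[1], reverse=True)


# Hypothetical candidate families: predicted residual vs. chart separation d.
candidates = {
    "trivial": lambda d: 0.0,
    "linear": lambda d: 0.1 * d,
    "quadratic": lambda d: 0.025 * d * d,
}
ranking = rank_candidates(candidates, [1.0, 2.0, 3.0, 4.0], [0.1, 0.2, 0.3, 0.4], sigma=0.05)
print(ranking)
```

The output is a probability for each candidate, so a family that fits slightly worse stays on the list with reduced weight instead of being discarded.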

We do not yet possess the full transform family, but we already know the mathematical classes likely needed to search for it.

Falsifiability

The experimental programme has a clean falsifiability profile. Each A/B test between adjacent sensor layers either finds structured inter-chart residuals or it does not.

If the inferred inter-chart transforms are physically trivial across the tested regimes — mere rescalings, no stable structure, no anomalous flow — the programme’s experimental motivation weakens substantially. If they reveal stable structured residuals now discarded by standard reconstruction, then the case for nontrivial cross-chart structure strengthens and part of the phenomenon has been hiding inside the reduction pipeline.
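
A minimal sketch of one such null test, assuming independent Gaussian uncertainties and an illustrative model in which the residual grows linearly with chart separation and vanishes at zero separation. The model, the data, and the function name are assumptions made here, not the programme's stated test statistic.

```python
import math


def slope_null_test(separations, residuals, sigmas):
    """Weighted fit of residual = slope * separation, through the origin.

    Returns (slope, slope_error, two-sided p-value for slope == 0).
    """
    weights = [1.0 / (s * s) for s in sigmas]
    sum_wdd = sum(w * d * d for w, d in zip(weights, separations))
    sum_wrd = sum(w * r * d for w, r, d in zip(weights, residuals, separations))
    slope = sum_wrd / sum_wdd
    slope_error = 1.0 / math.sqrt(sum_wdd)
    z = slope / slope_error
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    return slope, slope_error, p_value


separations = [1.0, 2.0, 3.0, 4.0]   # toy chart separations
sigmas = [0.05] * 4                  # toy measurement uncertainties
null_case = slope_null_test(separations, [0.0, 0.0, 0.0, 0.0], sigmas)
signal_case = slope_null_test(separations, [0.1, 0.2, 0.3, 0.4], sigmas)
print(null_case, signal_case)
```

A slope consistent with zero at every tested separation counts against the mechanism, while a stable nonzero slope is the kind of structured residual the programme predicts.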

Either result is scientific progress. The binary-search strategy is intended to force a discriminating outcome, whether that outcome strengthens or weakens the programme’s motivation.

The places most often treated as calibration loss, nuisance residuals, or detector noise may instead contain information about nontrivial transforms between observational regimes. The HPAIC framework preserves and interrogates those residuals rather than compressing them away. In this programme, the transforms are not bookkeeping. They are the target.

Update (v12–v13): Since this post was first published, the Manifold Relativity series has advanced. Version 12 proves three structural propositions (nested accessibility, identity limit, matched-chart consistency), states the P23 chart-mismatch residual as a sharpened falsifiable prediction with explicit null-result conditions, and provides falsification criteria for the programme. Version 13 performs the programme’s first computations, establishing that spectral truncation of product thermal states produces sub-additive entropy composition, and derives an exact analytical formula for the truncation-induced mutual information (Proposition 2.8). A computational addendum preserving the full evidence trail, including epistemic corrections and negative findings, accompanies the v13 preprint.

This post is a related note to the Manifold Relativity preprint series. The formal chart-matching framework, spectral filter ΠT, observer-filter map OT, structural propositions (v12), sub-additivity computation (v13), and the open problems O33, O36, O37–O39 are discussed more formally in the Manifold Relativity preprint series (v1–v13).

A transition from one mapping to another and back, lasting only femtoseconds, is clearly not the same as an operation carried out entirely within a native mapping. V12’s matched-chart consistency theorem (Theorem 3.6) and v13’s space-time complementarity corollary establish the structural framework for this: chart transitions have a well-defined ordinary limit where they become trivial, and the novel physical content resides in the mismatch regime. A future version may address the specific transform families governing these transitions at large spectral separation. In summary, the goal is to do for chart transitions what relativity did for reference-frame changes: make comparison lawful without assuming all observers inhabit the same accessible regime.

Paul E. Sorvik · Manifold Relativity Programme · Alexandria, Egypt

ORCID: 0009-0008-5717-7110

paulsorvik.wordpress.com

Hero image: CMS/CERN, Run 279685 / Event 178456860, 13 TeV, 2016-Aug-27. Used under CERN open licence with attribution.
